Presenter: Dan Schofield, University of Oxford, UK

Schedule: THIS TUTORIAL IS CANCELLED

Audience:

The hands-on format is designed for participants with no prior knowledge or technical experience. It gives them practice in using open-source tools to annotate, identify and track animals in video, and in using the output data for behavioural analysis.

Requirements:

Attendees will need a laptop and internet access (free Wi-Fi is available throughout the conference location). Those who would like to try running the detection and tracking software should also sign up beforehand for a free Google account with available storage.

Content:

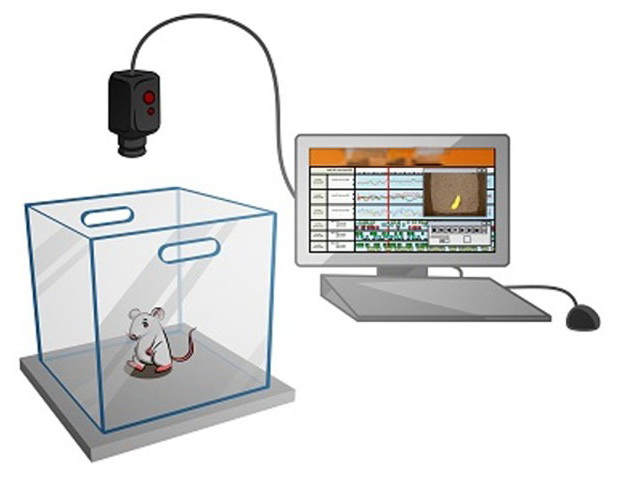

The use of video recordings is fundamental to research in behavioural science; however, expert manual annotation is both time-consuming and resource-intensive. Machine learning offers the potential to rapidly analyse large-scale video and audio datasets, increasing the scale, depth and reproducibility of research, but these workflows are often not accessible to researchers without a background in computer science. This workshop will introduce attendees to the latest approaches and techniques in the field of computer vision, with a practical example of detection and tracking in video.

Schedule:

The workshop will begin with an overview of the main objectives, an introduction to basic concepts, and a demonstration of the latest computer vision techniques and tools for measuring behaviour. The second part of the workshop will allow participants to try using easily accessible annotation and visual tracking software to prepare training datasets and run detection and tracking models on their own data.

Measuring Behavior
